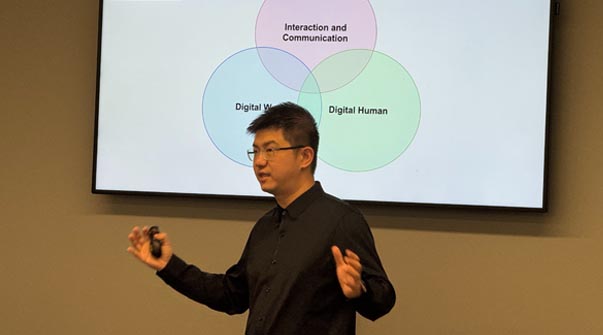

Augmented Communication for a Universally Accessible Metaverse

Ruofei Du

Invited Talk at UMD by Dr. Varshney, College Park, Maryland.


Situationally Induced Impairments and Disabilities (SIIDs) can significantly hinder user experience in everyday activities. Despite their prevalence, existing adaptive systems predominantly cater to specific tasks or environments and fail to accommodate the diverse and dynamic nature of SIIDs. We introduce Human I/O, a real-time system that detects SIIDs by gauging the availability of human input/output channels. Leveraging egocentric vision, multimodal sensing and reasoning with large language models, Human I/O achieves good performance in availability prediction across 60 in-the-wild egocentric videos in 32 different scenarios. Further, while the core focus of our work is on the detection of SIIDs rather than the creation of adaptive user interfaces, we showcase the utility of our prototype via a user study with 10 participants. Findings suggest that Human I/O significantly reduces effort and improves user experience in the presence of SIIDs, paving the way for more adaptive and accessible interactive systems in the future.
